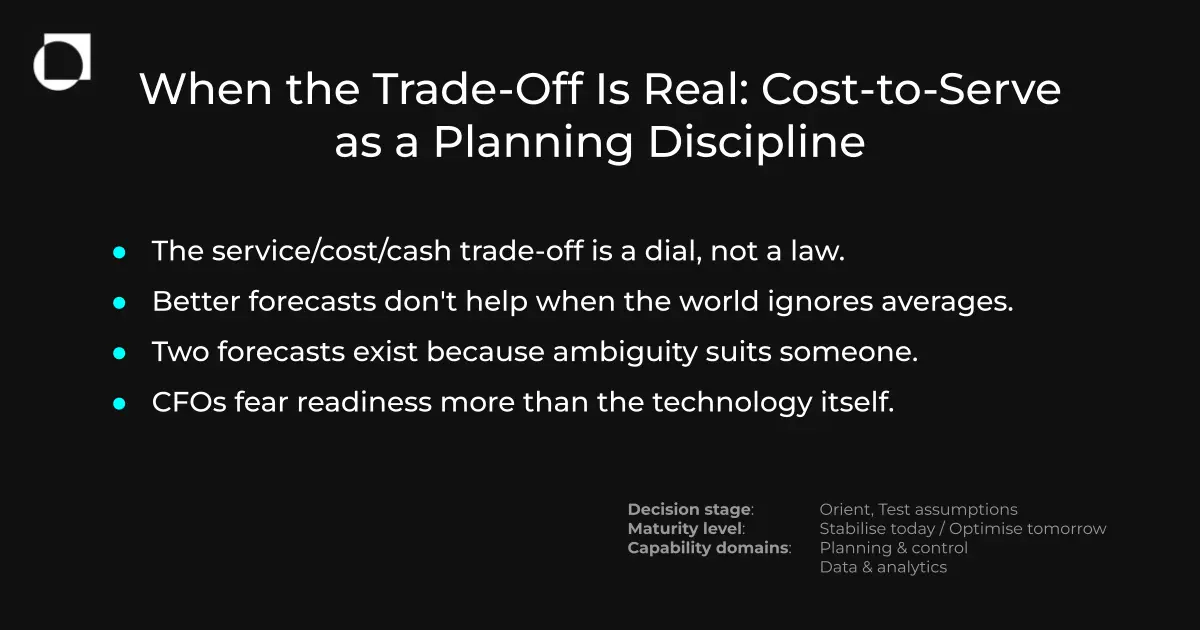

A previous discussion on this site posed the question of whether the service/cost/cash trade-off is an operational law or an artefact of how planning decisions get made. The theoretical case for the latter is reasonably well established. What is harder to assess is whether it holds up when you put it in front of practitioners who are living inside that tension right now, in volatile conditions, with imperfect data and finance departments that are not always pulling in the same direction.

A recent session hosted by Uzair Bawany of Oii.ai, a supply chain optimisation specialist, brought together senior planning and supply chain leaders from pharmaceutical, food and beverage, industrial manufacturing, and multi-sector distribution. The discussion was held under the Chatham House Rule. What follows draws on the themes that emerged, with Uzair's reflections woven in as the conversation developed.

The triangle is real, but it moves

Several participants described managing what one called "the triangle of tension" between service levels, working capital and margin. That framing will be familiar. What was more interesting was the observation that the triangle's dominant constraint shifts over time, and that organisations often fail to notice when it has shifted.

One participant described spending the previous two years under significant pressure to reduce working capital, with inventory reduction the primary lens. Service was protected but cash was clearly in the lead. In the months before the session, that had changed. Geopolitical disruption had moved service back to the front, and the business had quietly given its planning function more latitude to use working capital as a tool to protect availability. Full container utilisation, previously not a meaningful consideration given low volumes in that particular lane, was now a live optimisation question.

The implication was not that the triangle had been resolved. It was that the dominant constraint was being managed more consciously, and that this consciousness had opened up new conversations between planning and logistics that had not been happening before.

What the participants consistently lacked was a reliable way to calculate the bottom-line impact of shifting that balance. Intuition and experience were doing much of the work that modelling and simulation were not.

The forecast accuracy trap

A participant working in the electronics sector raised something worth dwelling on. His organisation has a persistent internal expectation that reaching some industry-standard forecast accuracy threshold will resolve the core planning problems. His view is that this is simply not true in a sector where customer requirements are volatile and lead times are short. The real choice is whether the organisation focuses everything on forecast accuracy, or accepts that the right response is a more agile supply chain, shorter manufacturing lead times, and a different relationship with buffer stock.

The difficulty is that each of those alternatives carries a cost. Shorter manufacturing lead times may require holding more components. Increased buffer stock consumes working capital. The trade-off cannot be avoided by improving the forecast; it can only be made more or less deliberately.

This connects directly to a pattern identified in earlier writing on this site: planning keeps failing not because of poor execution but because organisations are applying optimisation logic designed for a stable world to a world that is no longer stable. Forecast accuracy initiatives are a version of that same misapplication. They improve the quality of a single-point estimate in an environment where the value of any single-point estimate is structurally limited.

Uzair's reflection on this was direct. Using simulation to model specific scenarios, including the cost impact of different lead time assumptions, does not eliminate uncertainty. What it does is make the trade-off explicit rather than implicit. The choice between a 60-day and a 70-day lead time assumption becomes a calculable decision rather than a judgement call absorbed somewhere in the planning cycle.
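To illustrate what making that choice calculable could look like, here is a minimal sketch that compares buffer stock and holding cost under a 60-day and a 70-day lead time assumption. It is not the method discussed in the session. It assumes the textbook safety stock formula (service factor times daily demand variability times the square root of lead time), which in turn assumes normally distributed, independent daily demand and a fixed lead time. The function names and every input figure are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def safety_stock(daily_demand_std, lead_time_days, service_level):
    """Textbook safety stock: z * sigma_daily * sqrt(lead time in days).

    Assumes normal, independent daily demand and a fixed lead time.
    """
    z = NormalDist().inv_cdf(service_level)  # service factor for the target cycle service level
    return z * daily_demand_std * sqrt(lead_time_days)


def compare_lead_times(lead_times, daily_demand_std, service_level,
                       unit_cost, holding_rate):
    """Return buffer units and annual holding cost for each lead time assumption."""
    results = {}
    for lead_time in lead_times:
        units = safety_stock(daily_demand_std, lead_time, service_level)
        results[lead_time] = {
            "safety_stock_units": units,
            # holding_rate is the annual carrying cost as a fraction of unit cost
            "annual_holding_cost": units * unit_cost * holding_rate,
        }
    return results


if __name__ == "__main__":
    # All inputs below are illustrative assumptions, not figures from the session.
    scenarios = compare_lead_times(
        lead_times=[60, 70],
        daily_demand_std=40.0,
        service_level=0.95,
        unit_cost=12.0,
        holding_rate=0.20,
    )
    for lead_time, outcome in scenarios.items():
        print(lead_time, round(outcome["safety_stock_units"]),
              round(outcome["annual_holding_cost"], 2))
```

Under these assumptions the buffer grows with the square root of lead time, so moving from 60 to 70 days adds roughly 8% to safety stock and its holding cost. A real simulation would also need to account for lead time variability, pipeline stock, and demand that is not normally distributed.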

Fragmented data as a structural constraint

One participant operates across a business that has grown through acquisition, with no common ERP and significant variation in how data is maintained and defined across regions. The practical consequence is that trade-off modelling has to be done manually, using what were described, with a degree of resignation, as "old school methods." The data visibility to simulate what-if scenarios across the network does not yet exist in any integrated form.

This is not an unusual situation. An earlier discussion on this site examined the specific challenge of supply chain leaders becoming accountable for data they do not control, and the gap between data that exists somewhere in the organisation and data that is usable at the point of decision. The cost-to-serve context makes that gap more consequential. If you cannot see your cost-to-serve position across your network, you cannot optimise it. You can only react to it after the fact.

A question submitted by a participant who was unable to attend put this concretely: how do you validate a SKU catalogue as a prerequisite for meaningful cost-to-serve analysis? The answer from practitioners who had been through the process was that good data, sufficient for the specific use case, tends to be more valuable than perfect data delayed by months of harmonisation effort. One participant described a three-to-four-week data cleaning process that got them to a workable position, having used tooling to accelerate what would otherwise have been a laborious manual exercise.
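As a rough illustration of "sufficient for the specific use case", the sketch below runs a few fitness-for-purpose checks on a SKU catalogue before any cost-to-serve work begins. It is not the tooling the participants used. The field names (`sku`, `unit_cost`, `unit_of_measure`) and the choice of checks are assumptions; a real catalogue would need checks driven by the fields the analysis actually depends on.

```python
from collections import Counter

# Hypothetical minimum fields a cost-to-serve analysis might need per SKU.
REQUIRED_FIELDS = ("sku", "unit_cost", "unit_of_measure")


def validate_catalogue(rows):
    """Return a list of (row_index, issue) tuples for a list of SKU dicts."""
    issues = []
    # Normalise SKU codes so "a-1" and "A-1 " count as the same item.
    normalised = [str(row.get("sku") or "").strip().upper() for row in rows]
    counts = Counter(normalised)

    for index, (row, sku) in enumerate(zip(rows, normalised)):
        for field in REQUIRED_FIELDS:
            if row.get(field) in (None, ""):
                issues.append((index, f"missing {field}"))
        if sku and counts[sku] > 1:
            issues.append((index, f"duplicate sku {sku}"))
        cost = row.get("unit_cost")
        if isinstance(cost, (int, float)) and cost <= 0:
            issues.append((index, "non-positive unit_cost"))
    return issues


if __name__ == "__main__":
    # Illustrative rows only; not data from the session.
    catalogue = [
        {"sku": "a-1", "unit_cost": 2.5, "unit_of_measure": "EA"},
        {"sku": "A-1 ", "unit_cost": 3.0, "unit_of_measure": "EA"},
        {"sku": "B-2", "unit_cost": None, "unit_of_measure": "CS"},
        {"sku": "C-3", "unit_cost": 0, "unit_of_measure": "EA"},
    ]
    for index, issue in validate_catalogue(catalogue):
        print(index, issue)
```

The output is a list of issues to triage, not a pass or fail verdict, which fits the practitioners' point: the aim is to know which gaps matter for the analysis at hand, not to reach a perfect catalogue first.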

Uzair's observation was that the "garbage in, garbage out" assumption increasingly understates what modern tooling can do with messy inputs. The practical floor for what constitutes usable data has shifted. That does not remove the need for data governance, but it does change the calculus of when it is worth starting.

The finance relationship: one forecast or two?

One of the sharpest practical tensions described in the session was the finance and planning disconnect that persists in organisations still running S&OP rather than integrated business planning. In one participant's organisation, the finance team maintains a separate demand number, partly because commercial teams have historically inflated forecasts to secure additional inventory. Planning then cuts inventory in response to what it correctly reads as an unreliable signal, which frustrates the commercial side, which inflates more.

The participant described a shift in progress: a move towards IBP that would derive the finance number from the same demand inputs used in planning, eliminating the parallel forecast. The change required the CFO and CEO to be on board before anyone else would follow. The observation, made without complaint, was simply that senior sponsorship, rather than the business case itself, turned out to be the enabling condition.

This is worth noting for anyone who has experienced a similar dynamic. The analytical argument for a single integrated number is not hard to make. The resistance is rarely logical. It tends to come from teams that have found ways to use the current ambiguity to their advantage, and who need to be convinced that losing that advantage is in their interest.

Business case pressure and the CFO conversation

The session closed with a reflection on the soft side of planning transformation, and particularly on why so many initiatives stall before they properly start.

One participant's experience was that senior stakeholders do not necessarily need to be persuaded that AI or simulation technology is valuable. They are under enough shareholder pressure that the appetite is there. What concerns them is whether the initiative will deliver something tangible within the financial year, or whether it will produce a proof of concept that recommends investing in three years' time.

The framing that had worked in practice was a proof of value model, offered without commercial commitment, that demonstrated specific impact within a defined environment before any broader adoption discussion began. The argument for that approach is not just commercial. It addresses the specific fear that is most likely to slow a CFO down, which is not scepticism about the technology but uncertainty about whether the organisation is ready to absorb it.

Uzair's reflection was consistent with this. The old model of substantial upfront investment with deferred returns is no longer the norm. The expectation from buyers should be that value is demonstrated before commitment is made, and that return on investment is measurable in weeks rather than years.

This session was part of BestPractice.Club's ongoing practitioner inquiry series. It was hosted by Oii.ai, whose simulation-based planning optimisation platform was discussed in the context of the challenges raised. The next in-person meeting, on 29 April in London, includes a session on making the business case for planning investment decisions.